How To Scrape Amazon Reviews


How To Scrape Amazon Product Data: Names, Pricing, ASIN, Etc.

A name is defined for the Spider, and it must be unique across all Spiders, because Scrapy looks up Spiders by name. allowed_domains is initialized with amazon.com, since we are going to scrape data from this domain, and start_urls points to specific pages on the same domain.
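To make the role of allowed_domains concrete, here is a minimal standard-library sketch of the kind of check a crawler can apply before following a link. It is not Scrapy's own code; the spider name, the start URL and the is_allowed helper are assumptions made up for illustration.

```python
from urllib.parse import urlparse

# Hypothetical spider settings mirroring the three attributes described above.
SPIDER_NAME = "amazon_reviews"
ALLOWED_DOMAINS = ["amazon.com"]
START_URLS = ["https://www.amazon.com/product-reviews/EXAMPLEASIN"]


def is_allowed(url, allowed_domains=ALLOWED_DOMAINS):
    """Return True if the URL's host is an allowed domain or one of its subdomains."""
    host = (urlparse(url).hostname or "").lower()
    return any(host == d or host.endswith("." + d) for d in allowed_domains)


# Keep only the links that stay on the allowed domain.
candidate_links = START_URLS + ["https://example.com/some-other-page"]
followable = [link for link in candidate_links if is_allowed(link)]
```

With a framework such as Scrapy you would only declare the attributes and let the framework do the filtering; the sketch just shows why links to other sites never get crawled.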

Amazon And Web Scraping

This will let you see your project in action, highlighting every step that it takes. Next, we’ll create a conditional command to let ParseHub know that we only want the names of the directors extracted from the list. To do that, click on the plus sign next to selection1 (we’ve renamed this director), then choose Advanced and Conditional. If you’re interested in scraping more Amazon data, check our in-depth guide on scraping all kinds of Amazon data for free. For this project, however, we'll focus specifically on scraping Amazon reviews.
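The conditional step amounts to a filter on the label of each row. Here is a rough Python equivalent, assuming the details section has already been collected as (label, value) pairs; the sample rows and the function name are hypothetical.

```python
# Hypothetical detail rows: (label, value) pairs as a scraper might collect them.
DETAILS = [
    ("Actors", "Example Actor A, Example Actor B"),
    ("Directors", "Example Director A, Example Director B"),
    ("Format", "DVD"),
]


def extract_if_label_matches(rows, wanted_label):
    """Keep values only from rows whose label contains the wanted text."""
    wanted = wanted_label.lower()
    return [value for label, value in rows if wanted in label.lower()]


directors = extract_if_label_matches(DETAILS, "director")
```

Matching on a substring of the label means both "Director" and "Directors" are picked up, which helps when listings vary slightly.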

Scraping Amazon Product Data

What sets Dataminer apart is that it has many more features than other extensions. Octoparse is another web scraping tool with a desktop application (Windows only, sorry macOS users). Tools like these are aimed at teams without developers that want to quickly scrape websites and transform the data. Historically, they had a self-serve visual web scraping tool.

Scraping Amazon Results Page

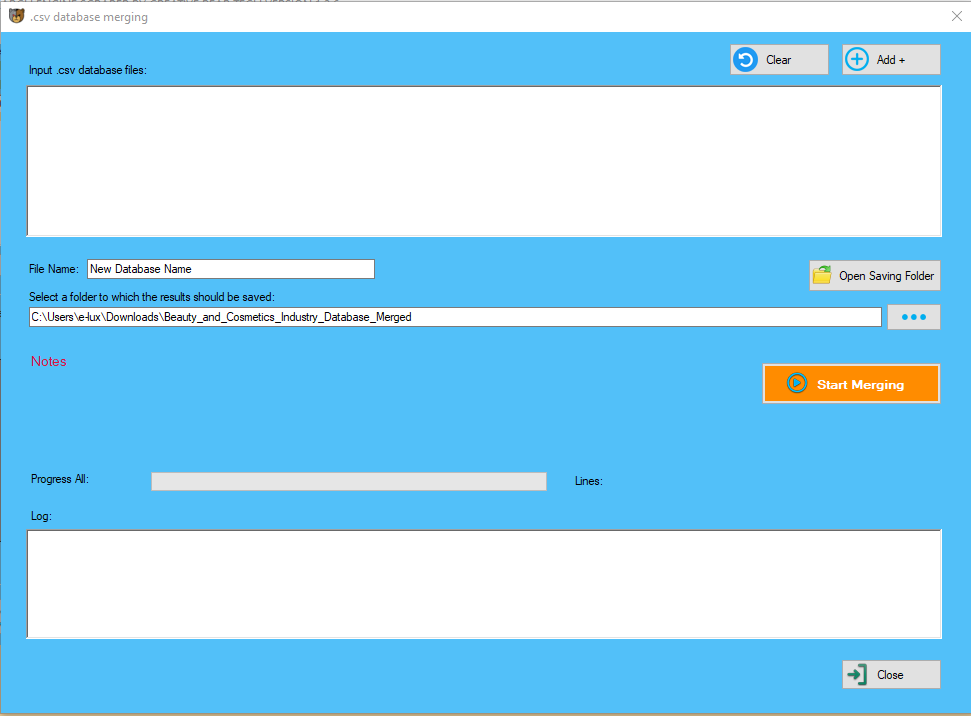


In this post we are going to look at the different web scraping tools available, both commercial and open-source. With ParseHub, users can extract data from anywhere on a website and get structured scraped data back. Once you open ParseHub, click on Create New Project and enter the URL of the web page you want to scrape. ScrapingHub offers Scrapy hosting, meaning you can easily deploy your Scrapy spiders to their cloud. Goutte provides a nice API to crawl websites and extract data from the HTML/XML responses.

Running And Exporting Your Project

It is a default class, like Items, which Scrapy generates for users. Apart from manufacturer-provided product data, Amazon also stores a wealth of user-provided data in the form of product reviews and ratings. Using WebHarvy you can easily extract each product review as well as the reviewer’s details, along with most of the data listed above from Amazon product listings.

You may have to repeat this step with the second review to fully train the scraper. You are now ready to scrape Amazon data to your heart's desire. On the left sidebar, click on the "Get Data" button and then on the "Run" button to run your scrape. For longer projects, we recommend doing a Test Run to verify that your data will be formatted correctly. By default, ParseHub will extract the text and URL from this link, so expand your new next_button selection and remove these 2 commands.

It has many useful features; as usual, you can select elements with a simple point-and-click interface. You can export the data in many formats: CSV, JSON, or even through a REST API. Portia is another great open source project from ScrapingHub. It is a visual abstraction layer on top of the great Scrapy framework.

This will give us an option to create a new template, given that the layout of the product page is different from the list view that we started with. We’ll name this template details and click on Create New Template. The Relative Select command lets you select data related to the products (it’s called Relative Select for a reason, duh). As soon as we select the movie title, ParseHub will prompt us to click on the related data with an arrow. ParseHub extracts data from the website into a local database based on the customer's requirements. Users do not need basic programming knowledge to get started with ParseHub: it has a friendly user interface and very high-quality user support.

For big websites like Amazon or eBay, you can scrape the search results with a single click, without having to manually click and select the elements you want. One of the great things about Dataminer is that there is a public recipe list that you can search to speed up your scraping. A recipe is a list of steps and rules for scraping a website. Simplescraper is a very easy-to-use Chrome extension for quickly extracting data from a website.

ParseHub will now automatically create this new template and render the Amazon product page for the first product on the list. You will notice that ParseHub is now extracting the product name and URL for each product. “Web scraping,” also called crawling or spidering, is the automated gathering of data from someone else's website.
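If you post-process scraped records yourself rather than using a tool's export button, CSV and JSON output can be produced with the Python standard library alone. The following is a minimal sketch; the two sample records and their field names (name, url) are assumptions for illustration.

```python
import csv
import io
import json

# Hypothetical scraped records; the field names are assumptions for illustration.
products = [
    {"name": "Example Product A", "url": "https://www.amazon.com/dp/EXAMPLE1"},
    {"name": "Example Product B", "url": "https://www.amazon.com/dp/EXAMPLE2"},
]


def to_json(records):
    """Serialize the records as a pretty-printed JSON array."""
    return json.dumps(records, indent=2)


def to_csv(records, fieldnames=("name", "url")):
    """Serialize the records as CSV text with a header row."""
    buffer = io.StringIO()
    writer = csv.DictWriter(buffer, fieldnames=list(fieldnames))
    writer.writeheader()
    writer.writerows(records)
    return buffer.getvalue()


json_text = to_json(products)
csv_text = to_csv(products)
```

Writing to an in-memory buffer keeps the sketch free of file paths; in practice you would write the same text to a file.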

With a web scraper, we can scrape reviews and ratings for any product or product category on Amazon. You can scrape data from Amazon to run all kinds of analysis. You can use the navigate tool to jump to another page (see our interactive navigation tutorial in the extension for the details). You could probably scrape directly from the site or from one of the grocery delivery services. Most likely they have a script that runs in the background as a “worker” and dumps data back by zip code / store location and availability of items. When you run a scraping project from one IP address, your target website can easily clock it and block your IP. Residential scraping proxies let you conduct your market research without any worries. Let’s say that all we need from the product details section are the names of directors. Diffbot can take care of this with their automatic extraction API. Diffbot provides multiple structured APIs that return structured data from product, article and discussion web pages.
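One common way to avoid sending every request from a single IP address is to rotate through a pool of proxies. Below is a minimal sketch using only the standard library; the proxy addresses are placeholders, and the helper names are assumptions rather than part of any tool mentioned here. No request is actually sent.

```python
import itertools
import urllib.request

# Placeholder proxy endpoints; replace with addresses from your own provider.
PROXIES = [
    "http://proxy1.example.com:8000",
    "http://proxy2.example.com:8000",
    "http://proxy3.example.com:8000",
]


def proxy_rotation(proxies):
    """Return an iterator that yields the proxies round-robin, forever."""
    return itertools.cycle(proxies)


def build_opener_for(proxy):
    """Build a urllib opener that routes HTTP and HTTPS traffic through the proxy."""
    handler = urllib.request.ProxyHandler({"http": proxy, "https": proxy})
    return urllib.request.build_opener(handler)


# Assign a proxy to each of five upcoming requests.
rotation = proxy_rotation(PROXIES)
assignments = [next(rotation) for _ in range(5)]
```

Each page request would then use build_opener_for(proxy).open(url) with the next proxy in the rotation, so consecutive requests come from different addresses.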

- Unlike other web scrapers that only scrape content with a simple HTML structure, Octoparse can handle both static and dynamic websites that use AJAX, JavaScript, cookies, etc.

- Web data extraction includes, but is not limited to, social media, e-commerce, marketing, real estate listings and many others.

- As a result, you can have automated inventory tracking, price monitoring and lead generation at your fingertips.

- You can create a scraping task to extract data from a complex website, such as one that requires login and pagination.

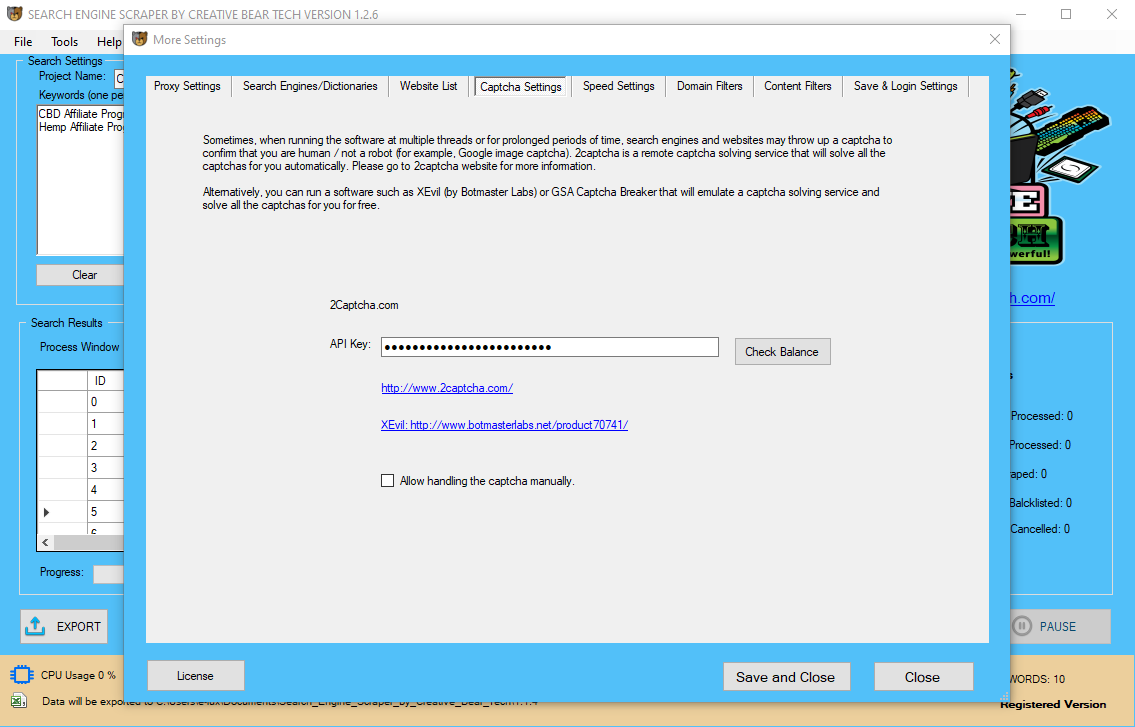

This Amazon scraper is simple to use and returns the requested data as JSON objects. If you answer yes to this question, then this section is very important for you to read. The scraping itself happens on ParseHub servers; you only have to create the instructions within the app.

ScrapingHub offers a lot of developer tools for web scraping. It is aimed at tech companies and individual developers. The web is the largest information storehouse that man has ever created. Scrapinghub provides fast and reliable web scraping services for converting websites into actionable data. Puppeteer is a Node-based headless browser automation tool often used to retrieve data from websites that require JavaScript to display content. Zenscrape is a hassle-free API that offers lightning-fast and easy-to-use capabilities for extracting large amounts of data from online resources. Try eScraper to scrape Amazon reviews; it offers a free scrape, so you can decide for yourself. Dexi.io is an intelligent, automated web extraction software that applies sophisticated robot technology to provide users with fast and efficient results. Diffbot differs from most other web scrapers because it uses computer vision and machine learning technologies (instead of HTML parsing) to harvest data from web pages. ScrapeSimple provides a service that creates and maintains web scrapers according to the customers’ instructions. Each unique web page (URL) that is processed and extracted by ScrapeStorm counts as an API call. Overall, FMiner is a really good visual web scraping software. Portia lets you create Scrapy spiders without a single line of code, using a visual tool. Dataminer is one of the most famous Chrome extensions for web scraping (186k installations and counting).

Although scraping is ubiquitous, it isn't clearly legal. A variety of laws may apply to unauthorized scraping, including contract, copyright and trespass to chattels laws. My own experiments with scraping Amazon and Google have been stopped dead in the water by their anti-bot traffic controls. If you target your scraping to further your own business, and impinge on someone else's business model, you're in waters that are currently murky.

Chrome extensions run in a severely restricted environment. While this is arguably good for security, it prevents us from building some of the powerful tools we can build in Firefox. We do plan to eventually release a standalone app with no browser dependency. With ParseHub, all of the tools combine easily, so you do not need that distinction. WinAutomation is a Windows web scraping tool that enables you to automate desktop and web-based tasks. Scrapinghub provides a cloud-based web scraping platform that allows developers to deploy and scale their crawlers on demand. We've invested very heavily in building out a solid infrastructure for extracting data.

Once we do that with the first movie, we’ll do it again with the second to make sure that the rest of the information is collected as well. Clicking on Start project on this URL will open the page in ParseHub's integrated browser, which is a very handy feature. Before we get into action, let’s get two things covered. First, make sure you’re using reliable scraping proxies, as they can definitely make or break your project.

Now use the PLUS (+) button next to the product selection and choose the “Click” command. A pop-up will appear asking you if this link is a “next page” button. Click “No” and, next to Create New Template, enter a new template name; in this case, we will use product_page. Repeat steps 4 through 6 to also extract the product star rating, the number of reviews and the product image. Open ParseHub, click on “New Project” and use the URL from Amazon’s results page. Without proxies, you get clocked, your IP gets blocked and you can wave your research goodbye.

If you want to make web scraping easy, you can’t go wrong with ParseHub. It’s not only perfect for absolute beginners, it’s also the best choice for those who need things done quickly and easily. Octoparse is a robust web scraping tool which also provides web scraping services for business owners and enterprises. I am trying to read all the comments for a given product, both to learn Python and for a project; to simplify my task, I chose a product at random to code against.

We’ll use the same Click command to select the first piece of information given (in this case, Actors). This will highlight the rest of the categories as well, so we’ll select the second too so that ParseHub knows to look for directors in this particular section. Moving on, we’ll need to collect some more specific information from individual product pages. To do this, once again, we’ll choose the Click command and select the first movie title, The Addams Family. This time, however, when asked if it’s a next page button, we’ll click on No.

Their solution is quite expensive, with the lowest plan starting at $299 per month. You also have to deal with constantly upgrading and updating your scraper as they make changes to their site layout and anti-bot system to break existing scrapers. Captchas and IP blocks are also a major problem, and Amazon uses them a lot after a few pages of scraping. This is even more important to sellers and vendors on Amazon.
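When captchas and IP blocks start to appear, a scraper usually needs to detect the block and slow down rather than keep hammering the site. The sketch below shows one possible approach, exponential backoff. The status codes treated as blocks, the "captcha" text check and all function names are assumptions, and the fetch function is passed in so the example does not depend on any particular HTTP library.

```python
import time

# Assumption: statuses commonly returned when a client is being throttled.
BLOCK_STATUS_CODES = {429, 503}


def looks_blocked(status_code, body):
    """Heuristic: treat throttling statuses or a captcha page as a block."""
    return status_code in BLOCK_STATUS_CODES or "captcha" in body.lower()


def backoff_delays(base=2.0, factor=2.0, retries=4, cap=60.0):
    """Return the wait in seconds before each retry, growing exponentially."""
    return [min(cap, base * factor ** attempt) for attempt in range(retries)]


def fetch_with_backoff(fetch, url, sleep=time.sleep):
    """Call fetch(url) -> (status, body); wait and retry while it looks blocked."""
    for delay in backoff_delays():
        status, body = fetch(url)
        if not looks_blocked(status, body):
            return body
        sleep(delay)
    return None  # still blocked after all retries
```

Passing sleep as a parameter makes the waiting behaviour easy to replace in tests; combining backoff with proxy rotation is a common next step.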



Amazon is not like other websites you flex your web scraping muscles and skills on: it is backed by a huge and experienced technical team, probably far more experienced than you are. Before letting ParseHub loose, we’d always recommend testing it first to see if it’s working correctly. To do this, click on Get Data on the left-hand side, and then select Test Run.

For businesses, the reviews left by buyers of their products can help them fine-tune their selection and learn what users of the product really like and dislike. When I say reviews, I don’t mean star ratings but actual comments, which can be used for sentiment analysis and other kinds of analysis. Sellers can use this data for competitive analysis and to monitor their competitors’ product ratings and prices.

To open the project in your account, open ParseHub, go to My Projects, click on Import Project and select the file. Note that this project will work on Etsy only. A user with basic scraping skills can make a smart move by using this brand-new feature, which lets them turn web pages into structured data instantly. The Task Template Mode takes only about 6.5 seconds to pull down the data behind one page and lets you download the data to Excel.

ScrapingHub is one of the most well-known web scraping companies. They have a lot of products around web scraping, both open-source and commercial. They are the company behind the Scrapy framework and Portia.

When you purchase an annual plan for the first time, scraping tasks are created for you for free: 3 with the Business Plan, 2 with the Premium Plan and 1 with the Professional Plan.

Using the Relative Select command, click on the reviewer’s name and the rating below it. An arrow will appear to show the association you’re creating. For this task, we will use ParseHub, an extremely powerful web scraper. To make things even better, ParseHub is free to download.
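For readers who prefer code to a point-and-click tool, the same association between a reviewer's name and the rating below it can be sketched with Python's built-in HTML parser. The markup and class names (review, author, rating, body) are invented for illustration; Amazon's real pages use different markup that changes over time.

```python
from html.parser import HTMLParser

# Invented sample markup; real review pages are structured differently.
SAMPLE_HTML = """
<div class="review">
  <span class="author">Reviewer One</span>
  <span class="rating">5</span>
  <p class="body">Works as described.</p>
</div>
<div class="review">
  <span class="author">Reviewer Two</span>
  <span class="rating">2</span>
  <p class="body">Stopped working after a week.</p>
</div>
"""


class ReviewParser(HTMLParser):
    """Collect one dict per review block, keyed by the class of each field."""

    FIELDS = ("author", "rating", "body")

    def __init__(self):
        super().__init__()
        self.reviews = []
        self._field = None  # field whose text we expect next, if any

    def handle_starttag(self, tag, attrs):
        classes = (dict(attrs).get("class") or "").split()
        if tag == "div" and "review" in classes:
            self.reviews.append({})  # start a new review record
        elif self.reviews:
            for name in self.FIELDS:
                if name in classes:
                    self._field = name

    def handle_endtag(self, tag):
        self._field = None  # stop capturing once the element closes

    def handle_data(self, data):
        if self._field and self.reviews:
            self.reviews[-1][self._field] = data.strip()


parser = ReviewParser()
parser.feed(SAMPLE_HTML)
parser.close()
reviews = parser.reviews
```

Each record keeps the name, rating and comment together, which is the same grouping the Relative Select arrow expresses visually.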
